Key Takeaways

- 01. Natural language processing gives AI chatbots the ability to understand human language beyond simple keyword matching and scripted responses.

- 02. Tokenization is a foundational NLP step that breaks your sentences into units AI chatbots can process, analyze, and interpret with precision.

- 03. Intent recognition models allow machine learning chatbots to identify what users truly want, even when phrased in unexpected or informal ways.

- 04. Sentiment analysis gives intelligent chatbots the capability to detect emotional tone and adjust their responses to match user mood in real time.

- 05. Context retention across multi-turn conversations is what separates advanced conversational AI platforms from basic rule-based chatbot systems.

- 06. Multilingual NLP capabilities allow AI chatbots to serve users in India, the UAE, and globally in their native language without switching platforms.

- 07. NLP algorithms help AI chatbots correct typos, decode slang, and understand abbreviated language commonly used in everyday messaging apps.

- 08. Machine learning chatbots improve as they are retrained on real conversations, using feedback loops to refine accuracy and deliver smarter automated customer support over time.

- 09. Voice-enabled chatbots combine automatic speech recognition with NLP to process spoken language and respond accurately in real time.

- 10. Businesses deploying NLP-powered AI chatbots in customer service report higher engagement, faster resolution times, and improved user satisfaction scores.

With over eight years of experience building conversational interfaces for businesses across India and the UAE, we have seen first-hand how natural language processing has transformed what ai chatbots can do. In the early days, chatbots were rigid and frustrating. Today, they hold meaningful, productive conversations with thousands of customers simultaneously.

The shift happened because of NLP. Natural language processing is the core technology that lets an AI chat assistant move beyond matching keywords to actually understanding what a person means, needs, and feels when they type a message. This guide breaks down every layer of that process so you understand what is really happening when a chatbot responds intelligently to your query.

From tokenization and intent recognition to sentiment analysis and multilingual support, we will walk through how NLP powers every stage of a chatbot conversation in plain language, backed by years of practical implementation experience.

Natural language processing is a branch of artificial intelligence that gives machines the ability to read, understand, and generate human language. Without NLP, ai chatbots would be nothing more than lookup tables, returning fixed answers only when users type exact trigger phrases.

Human language is enormously complex. A single idea can be expressed in hundreds of different ways. People use informal phrasing, abbreviations, cultural references, sarcasm, and incomplete sentences. NLP algorithms are specifically trained to handle all of this variation so that intelligent chatbots can respond accurately regardless of how a message is worded.

For businesses in India and Dubai deploying automated customer support tools, NLP is not optional. It is the difference between a chatbot that handles real customer queries and one that confuses and frustrates users within seconds. AI-powered assistants built on strong NLP foundations typically outperform rule-based alternatives on measures such as containment rate, resolution time, and user satisfaction.

Language Understanding

NLP lets ai chatbots decode meaning, not just match words, enabling human language understanding at scale.

Real Time Processing

Real time language processing ensures users receive instant, context-aware replies without noticeable delays.

Global Scalability

Multilingual NLP allows conversational AI to serve markets in India, UAE, and beyond from a single platform.

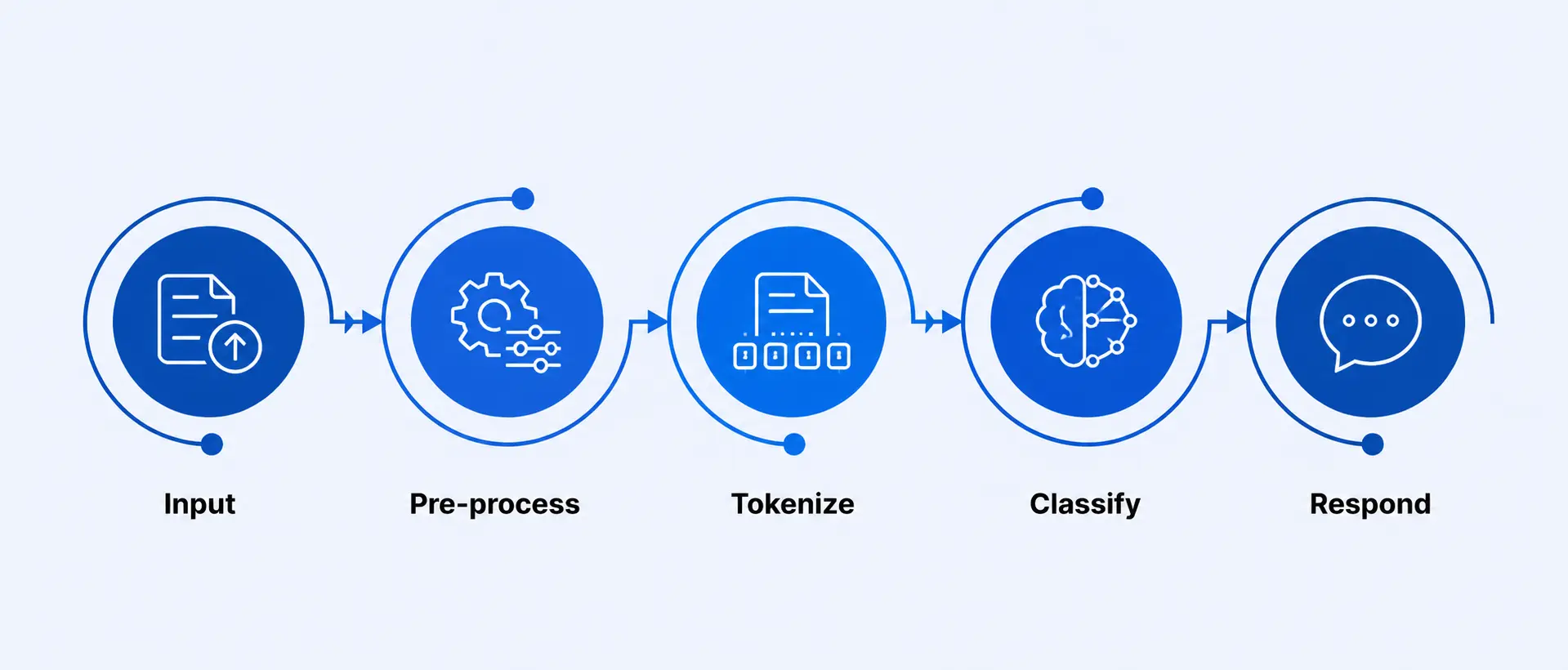

When you send a message to an ai chatbot, it does not simply read your words the way a human would. Instead, it runs your input through a structured pipeline of NLP stages, each designed to extract a specific type of information from your text.

The process typically begins with pre-processing, where the chatbot cleans your input by removing noise, normalising spelling, converting text to lowercase, and stripping out irrelevant characters. This step is crucial for voice enabled chatbots that must first convert spoken words into clean text before processing can begin.
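The pre-processing step described above can be sketched in a few lines of Python. This is a minimal illustration, not a production cleaner: the function name `preprocess` is our own, and the character filter assumes English-like text (scripts that rely on combining marks, such as Devanagari, would need a different filter).

```python
import re
import unicodedata


def preprocess(text: str) -> str:
    """Clean a raw user message before tokenization (illustrative sketch)."""
    # Normalise Unicode so that variants such as full-width letters
    # compare equal to their ordinary forms.
    text = unicodedata.normalize("NFKC", text)
    # Convert to lowercase so "ORDER" and "order" are treated alike.
    text = text.lower()
    # Replace anything that is not a word character, whitespace,
    # or an apostrophe with a space.
    text = re.sub(r"[^\w\s']", " ", text)
    # Collapse repeated whitespace and trim both ends.
    return re.sub(r"\s+", " ", text).strip()


print(preprocess("  Hello!!!   WHERE is my ORDER??  "))
# prints: hello where is my order
```

Real systems usually add spell correction and language-specific normalisation on top of this, but the order of operations (normalise, lowercase, strip noise) is typically the same.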

After pre-processing, the chatbot moves through tokenization, part-of-speech tagging, named entity recognition, and intent classification. Each of these steps adds a layer of understanding, building a comprehensive picture of what you actually want.

- 01. Raw text or voice received

- 02. Clean and normalize text

- 03. Split into units

- 04. Detect intent and entities

- 05. Generate smart reply

Tokenization is one of the most essential early steps in how ai chatbots process language. It is the process of splitting a sentence or paragraph into smaller units called tokens. These tokens can be words, parts of words, or even individual characters depending on the model being used.

Consider the sentence “I want to book a flight to Dubai next Friday.” A tokenizer breaks this into individual tokens that the chatbot can analyze separately: “I”, “want”, “to”, “book”, “a”, “flight”, “to”, “Dubai”, “next”, “Friday”. The chatbot then knows to look for a destination, a date, and an action type.
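To make the example concrete, here is a minimal word-level tokenizer using a regular expression. It is a simplified sketch (the function name `tokenize` is ours), and real chatbots generally rely on a trained tokenizer rather than a single pattern.

```python
import re


def tokenize(sentence: str) -> list[str]:
    """Split a sentence into word tokens, dropping punctuation."""
    # \w+ matches runs of letters, digits, and underscores.
    return re.findall(r"\w+", sentence)


tokens = tokenize("I want to book a flight to Dubai next Friday.")
print(tokens)
# prints: ['I', 'want', 'to', 'book', 'a', 'flight', 'to', 'Dubai', 'next', 'Friday']
```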

Subword tokenization, used in large language models, goes further by splitting rare or compound words into recognisable fragments. Because almost any word can be assembled from known fragments, NLP models can still represent invented words, proper nouns from different languages, and technical jargon instead of discarding them as unknown. For markets like India and the UAE with diverse vocabulary, subword tokenization is particularly valuable in chatbot technology.
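The idea of splitting a word into known fragments can be illustrated with a greedy longest-match routine in the style of WordPiece. The vocabulary below is a tiny hand-made assumption for demonstration only; real vocabularies contain tens of thousands of entries learned from data.

```python
def wordpiece(word: str, vocab: set[str], unk: str = "[UNK]") -> list[str]:
    """Greedy longest-match-first subword split (WordPiece-style sketch)."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink it.
        while end > start:
            piece = word[start:end]
            if start > 0:
                # Fragments that continue a word carry a "##" prefix.
                piece = "##" + piece
            if piece in vocab:
                match = piece
                break
            end -= 1
        if match is None:
            # No fragment fits: the whole word becomes unknown.
            return [unk]
        pieces.append(match)
        start = end
    return pieces


# Hypothetical toy vocabulary.
VOCAB = {"re", "book", "flight", "##book", "##ing", "##s"}

print(wordpiece("rebooking", VOCAB))  # prints: ['re', '##book', '##ing']
print(wordpiece("flights", VOCAB))    # prints: ['flight', '##s']
```

Even though "rebooking" never appears in the vocabulary as a whole word, the model still receives three meaningful fragments instead of a single unknown token.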

Recognizing meaning is far more difficult than recognizing words. The same word can mean different things in different contexts, and two completely different sentences can mean the same thing. This is the challenge of semantic understanding, and it is where machine learning chatbots genuinely shine.

AI powered assistants use word embedding models such as Word2Vec, GloVe, and transformer-based embeddings from architectures like BERT. These models represent every word as a mathematical vector in a high-dimensional space. Words with similar meanings end up close together in that space, allowing the chatbot to understand that “cheap flights” and “affordable fares” are asking for the same thing.
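The "close together in vector space" idea is usually measured with cosine similarity. The sketch below uses invented three-dimensional vectors purely for illustration; real embeddings from Word2Vec, GloVe, or BERT have hundreds of dimensions and are learned from data, not written by hand.

```python
import math

# Hypothetical toy embeddings (assumed values, for illustration only).
EMBEDDINGS = {
    "cheap": [0.90, 0.10, 0.00],
    "affordable": [0.85, 0.15, 0.05],
    "flights": [0.10, 0.90, 0.10],
    "fares": [0.20, 0.85, 0.10],
    "weather": [0.00, 0.10, 0.95],
}


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def phrase_vector(phrase: str) -> list[float]:
    """Average the word vectors of a phrase (a common simple baseline)."""
    vectors = [EMBEDDINGS[word] for word in phrase.lower().split()]
    return [sum(column) / len(vectors) for column in zip(*vectors)]


similar = cosine(phrase_vector("cheap flights"), phrase_vector("affordable fares"))
unrelated = cosine(phrase_vector("cheap flights"), phrase_vector("weather"))
print(similar > unrelated)  # prints: True
```

With these toy values, "cheap flights" and "affordable fares" score close to 1.0 while "weather" scores far lower, which is the behaviour the chatbot relies on when it treats two differently worded requests as the same.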

Named entity recognition (NER) adds another layer by identifying specific pieces of information within your message, such as names, locations, dates, and product names. Smart customer service bots use NER to extract actionable data from every message without requiring users to fill in forms or follow rigid input templates.
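As a rough illustration of what NER produces, the sketch below pulls a location and a date out of a message using a small lookup list and a regular expression. This is a rule-based stand-in we are assuming for clarity; production NER is normally a trained statistical model, and the city list and labels here are hypothetical.

```python
import re

# Hypothetical gazetteer of known cities.
CITIES = {"dubai", "mumbai", "delhi"}

# Matches day names and relative dates, optionally preceded by "next" or "this".
DATE_PATTERN = re.compile(
    r"\b(?:next |this )?"
    r"(?:monday|tuesday|wednesday|thursday|friday|saturday|sunday|today|tomorrow)\b",
    re.IGNORECASE,
)


def extract_entities(message: str) -> list[tuple[str, str]]:
    """Return (label, text) pairs found in the message."""
    entities = []
    for match in DATE_PATTERN.finditer(message):
        entities.append(("DATE", match.group(0)))
    for match in re.finditer(r"\w+", message):
        if match.group(0).lower() in CITIES:
            entities.append(("LOCATION", match.group(0)))
    return entities


print(extract_entities("I want to book a flight to Dubai next Friday."))
# prints: [('DATE', 'next Friday'), ('LOCATION', 'Dubai')]
```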

Intent recognition is the process by which ai chatbots figure out what a user is trying to accomplish. It is arguably the most commercially important NLP function because it directly determines whether the chatbot takes the right action or gives a completely unhelpful reply.

Intent classification models are trained on thousands of labelled examples for each intent category. A “track my order” intent might be triggered by “where is my parcel?”, “has my package shipped?”, “I haven’t received my order yet”, and dozens of similar variations. The more examples a model is trained on, the more accurately it can classify even unexpected phrasings.
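The mapping from varied phrasings to one intent can be sketched with a nearest-example matcher over bag-of-words vectors. This is a deliberately simple stand-in for a trained classifier: the intent names and training phrases are assumptions, and the returned score is a similarity value, not a calibrated probability.

```python
import math
import re
from collections import Counter

# Hypothetical labelled examples per intent.
TRAINING = {
    "track_order": [
        "where is my parcel",
        "has my package shipped",
        "i haven't received my order yet",
    ],
    "refund_request": [
        "i want a refund",
        "please return my money",
        "refund my order",
    ],
}


def bag_of_words(text: str) -> Counter:
    """Count lowercase word occurrences in the text."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def classify(message: str) -> tuple[str, float]:
    """Return the best-matching intent and its similarity score."""
    query = bag_of_words(message)
    scores = {
        intent: max(cosine(query, bag_of_words(example)) for example in examples)
        for intent, examples in TRAINING.items()
    }
    best = max(scores, key=scores.get)
    return best, scores[best]


print(classify("where is my package"))  # prints: ('track_order', 0.75)
```

A production system would replace the word counts with learned embeddings and a trained model, but the shape of the output (an intent label plus a score) is what downstream logic typically consumes.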

Businesses using ai conversation tools for customer support in India and Dubai commonly train their bots on ten to fifty intent categories, covering everything from refund requests and product queries to escalation triggers and service appointments. Well-designed intent architecture is what transforms a basic chatbot into a genuinely useful conversational interface.

Sample Intent Recognition Confidence Scores

Real users do not type in perfect sentences. They use slang, make spelling errors, mix languages, and skip punctuation. Any ai chatbot that cannot handle this will fail in real-world deployment, no matter how sophisticated its backend logic might be.

Modern NLP algorithms address this through several mechanisms. Fuzzy matching allows chatbots to recognise words even when they are misspelled. Spell correction models, trained on common error patterns, silently fix typos before the rest of the pipeline even begins. Subword tokenization keeps abbreviations like “btw”, “asap”, and “lol” representable, so the model can learn their meaning from training data.
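Fuzzy matching and abbreviation handling can be approximated with Python's standard `difflib` module. The vocabulary, abbreviation table, and 0.8 cutoff below are assumptions chosen for illustration; real spell correction models are trained on large error corpora.

```python
import difflib

# Hypothetical domain vocabulary and abbreviation table.
VOCABULARY = ["refund", "order", "delivery", "cancel", "payment"]
ABBREVIATIONS = {
    "asap": "as soon as possible",
    "btw": "by the way",
    "pls": "please",
}


def normalise_token(token: str) -> str:
    """Expand abbreviations and correct near-miss spellings."""
    token = token.lower()
    if token in ABBREVIATIONS:
        return ABBREVIATIONS[token]
    if token in VOCABULARY:
        return token
    # Pick the closest vocabulary word if it is similar enough.
    matches = difflib.get_close_matches(token, VOCABULARY, n=1, cutoff=0.8)
    return matches[0] if matches else token


def normalise(message: str) -> str:
    return " ".join(normalise_token(token) for token in message.split())


print(normalise("pls cancel my ordr asap"))
# prints: please cancel my order as soon as possible
```

A high cutoff keeps unrelated short words such as "my" from being "corrected" into vocabulary terms, at the cost of missing heavier misspellings.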

For markets like India where users frequently mix Hindi and English in a pattern called Hinglish, or UAE where Arabic-English code-switching is common, chatbot automation systems must be trained specifically on these hybrid language patterns. We have built several such systems for regional businesses, and the investment in language-specific training data consistently pays off in user satisfaction and containment rates.

A conversation is not a series of isolated questions. Each message builds on what came before it. When you ask an ai chatbot “What about tomorrow?” as a follow-up, the chatbot must know what topic you were discussing before it can give a sensible reply. This is what contextual understanding means in practice.

Conversational AI systems maintain what is called a context window or dialogue state. This is essentially a memory of the current conversation that the NLP model references when processing each new message. Without it, the chatbot would treat every message as a completely new conversation, making multi-step processes like booking, troubleshooting, or qualification completely impossible.

Advanced ai conversation tools also maintain entity state across turns. If you mentioned your account number three messages ago, the chatbot should still have that information available when it needs to look something up. This kind of memory management is a key differentiator between beginner chatbot technology and enterprise-grade virtual assistants used in banking, e-commerce, and healthcare.

Multi-Turn Context Example

Turn 1: “I need a hotel in Mumbai for 3 nights.”

Turn 2: “What about a sea-facing room?”

Turn 3: “Does it include breakfast?”

The ai chatbot retains “Mumbai”, “3 nights”, and “sea-facing” across all turns to provide coherent answers throughout the entire conversation flow.
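The hotel example can be modelled as a small dialogue state object that accumulates slots across turns. This is a sketch under assumptions: the slot names are hypothetical, and the slot values are passed in by hand where a real system would obtain them from its intent and entity models.

```python
from dataclasses import dataclass, field


@dataclass
class DialogueState:
    """Keeps the conversation history and the entities gathered so far."""

    slots: dict = field(default_factory=dict)
    history: list = field(default_factory=list)

    def update(self, user_message: str, new_slots: dict) -> None:
        self.history.append(user_message)
        # Earlier slots persist; a turn only changes the slots it mentions.
        self.slots.update({k: v for k, v in new_slots.items() if v is not None})


state = DialogueState()
state.update("I need a hotel in Mumbai for 3 nights.", {"city": "Mumbai", "nights": 3})
state.update("What about a sea-facing room?", {"room_view": "sea-facing"})
state.update("Does it include breakfast?", {"question": "breakfast_included"})

print(state.slots)
# prints: {'city': 'Mumbai', 'nights': 3, 'room_view': 'sea-facing', 'question': 'breakfast_included'}
```

By the third turn the state still holds the city, the stay length, and the room preference, so "it" in "Does it include breakfast?" can be resolved against a specific booking request.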

One of the most powerful qualities of machine learning chatbots is their ability to improve over time. Unlike static rule based systems that can only do what they were explicitly programmed to do, NLP powered ai chatbots can be retrained and refined using real conversation data.

The learning loop works like this: conversations are logged and reviewed. Cases where the chatbot gave an incorrect or low-confidence response are flagged for analysis. NLP engineers and trainers then add those examples to the training dataset with correct labels. The model is retrained and redeployed with improved accuracy. In production deployments, this cycle can happen weekly or monthly.
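The review step of that loop can be sketched as two small functions: one that flags conversations for human review and one that folds corrected examples back into the training data. The field names and the 0.6 threshold are assumptions for illustration, and the retraining itself is left out.

```python
CONFIDENCE_THRESHOLD = 0.6  # assumed cut-off for "low confidence"


def flag_for_review(logs: list[dict], threshold: float = CONFIDENCE_THRESHOLD) -> list[dict]:
    """Select logged turns that were low-confidence or marked wrong by the user."""
    return [
        entry
        for entry in logs
        if entry["confidence"] < threshold or entry.get("user_marked_wrong", False)
    ]


def add_corrections(training_data: dict, reviewed: list[dict]) -> dict:
    """Append human-corrected examples under their correct intent label."""
    for entry in reviewed:
        training_data.setdefault(entry["correct_intent"], []).append(entry["text"])
    return training_data


# Hypothetical conversation log.
logs = [
    {"text": "wheres my stuff", "predicted": "refund_request", "confidence": 0.35},
    {"text": "track my order", "predicted": "track_order", "confidence": 0.93},
]

flagged = flag_for_review(logs)
# A human reviewer supplies the correct label for each flagged example.
for entry in flagged:
    entry["correct_intent"] = "track_order"

training_data = add_corrections({"track_order": ["where is my parcel"]}, flagged)
print(training_data)
# prints: {'track_order': ['where is my parcel', 'wheres my stuff']}
```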

Reinforcement learning from human feedback (RLHF) is an advanced variation in which human ratings and preference comparisons are used to train a reward model, which in turn guides fine-tuning of the chatbot's language model. This approach is used by many of the most sophisticated AI-powered assistants today, and it is one reason modern conversational AI platforms can improve noticeably over a few months of active use in a production environment.

Understanding the distinction between these two categories is essential for any business evaluating chatbot automation options. The gap in capability is substantial, and it directly affects the business outcomes you can expect from your investment.

Rule based chatbots follow decision trees. Every possible conversation path must be manually written. If a user says something not covered by the rules, the chatbot fails. NLP powered ai chatbots use statistical models trained on language data and handle novel inputs gracefully. Here is a clear comparison:[1]

| Feature | Rule Based Chatbots | NLP Powered AI Chatbots |
|---|---|---|
| Language Flexibility | Fixed keywords only | Any phrasing, synonyms, slang |
| Intent Understanding | Explicit rule required | Learned from training data |
| Context Retention | Usually none | Full multi-turn memory |
| Typo Handling | Fails on misspellings | Fuzzy matching and correction |
| Learning Over Time | Manual updates only | Continuous model retraining |
| Multilingual Support | Separate rules per language | Unified multilingual NLP model |
| Sentiment Detection | Not available | Built-in sentiment analysis |

Sentiment analysis is an NLP capability that allows ai chatbots to determine the emotional tone behind a piece of text. It classifies messages as positive, negative, or neutral, and advanced models can detect more nuanced emotions like frustration, excitement, confusion, or urgency.

In automated customer support contexts, sentiment analysis is enormously valuable. When a smart customer service bot detects that a user is frustrated or has used language indicating distress, it can automatically escalate the conversation to a live human agent. This prevents the situation from worsening and demonstrates empathy, even in a fully automated system.
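A very small lexicon-based scorer shows how sentiment can drive routing. The word lists and action names are assumptions for illustration; production systems use trained sentiment models, and a plain word count like this one misreads negation such as "not great".

```python
import re

# Hypothetical sentiment lexicons.
NEGATIVE = {"angry", "terrible", "worst", "useless", "frustrated", "unacceptable"}
POSITIVE = {"great", "thanks", "love", "perfect", "helpful"}


def sentiment_score(message: str) -> int:
    """Positive words add one point, negative words subtract one."""
    words = re.findall(r"[a-z']+", message.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def route(message: str) -> str:
    """Choose the next action based on the detected tone."""
    score = sentiment_score(message)
    if score < 0:
        return "escalate_to_human"
    if score > 0:
        return "offer_loyalty_prompt"
    return "continue_bot_flow"


print(route("This is the worst service, I am so frustrated"))  # prints: escalate_to_human
print(route("What time do you open?"))                         # prints: continue_bot_flow
```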

For e-commerce businesses in India and retail brands operating in Dubai, sentiment analysis also serves as a real-time feedback mechanism. By analysing thousands of chatbot conversations, businesses can identify patterns in customer sentiment around specific products, issues, or processes, and use that data to make operational improvements.

Positive Sentiment

Chatbot recognizes satisfaction and responds with upsell offers or loyalty prompts to maximize AI driven communication value.

Negative Sentiment

Chatbot detects frustration and triggers immediate escalation to a live agent, protecting customer experience and brand trust.

Neutral Sentiment

Chatbot continues normal conversational AI flow, staying focused on task completion and providing accurate helpful information.

The ability to communicate in a user’s native language is one of the most powerful things an AI chatbot can offer, particularly in diverse markets. India’s Constitution recognises 22 scheduled languages, alongside many other languages and dialects in everyday use. The UAE serves users speaking Arabic, English, Hindi, Tagalog, and Urdu, often within the same customer base.

Multilingual NLP models are trained on data from multiple languages simultaneously. Modern transformer models like mBERT and XLM-RoBERTa cover roughly 100 languages with a single set of shared model weights. Both are encoder models built for understanding text, so generating replies is handled by a separate generation component. This means a single chatbot model can understand users across language groups without requiring separate builds for each language.

Language detection is usually the first step in a multilingual pipeline. The ai chatbot identifies which language the user is writing in and then routes their message through the appropriate language-specific NLP layer. For businesses expanding across South Asia and the Middle East, multilingual intelligent chatbots are not a premium feature but a baseline requirement for genuine market reach.
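A first approximation of language detection is to check which writing system dominates the message and route on that. The sketch below uses only the standard library; the pipeline names are hypothetical. Script is not the same as language (Urdu is written in Arabic script, and Hinglish is often typed in Latin letters), so real systems follow this with a trained language identifier.

```python
import unicodedata
from collections import Counter

# Hypothetical routing table from script to downstream pipeline.
ROUTES = {
    "LATIN": "english_pipeline",
    "DEVANAGARI": "hindi_pipeline",
    "ARABIC": "arabic_pipeline",
}


def detect_script(text: str) -> str:
    """Return the most common Unicode script among the letters in the text."""
    counts = Counter()
    for char in text:
        if char.isalpha():
            # Unicode names start with the script, e.g. "ARABIC LETTER ALEF".
            name = unicodedata.name(char, "")
            if name:
                counts[name.split(" ")[0]] += 1
    return counts.most_common(1)[0][0] if counts else "UNKNOWN"


def route_message(text: str) -> str:
    return ROUTES.get(detect_script(text), "fallback_pipeline")


print(route_message("I need a hotel"))    # prints: english_pipeline
print(route_message("मुझे होटल चाहिए"))     # prints: hindi_pipeline
print(route_message("أريد حجز فندق"))     # prints: arabic_pipeline
```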

Languages Supported by Leading Multilingual NLP Models

The ultimate goal of NLP in ai chatbots is not just accuracy but naturalness. When a chatbot sounds robotic or formal in the wrong context, users disengage quickly. Natural language generation (NLG), the output side of NLP, is what determines how responses are phrased and how they feel to the person reading them.

Modern AI-driven communication models can vary sentence structure, adjust formality based on context, mirror the user’s tone, and generate responses that feel genuinely conversational rather than scripted. This is powered by large language models trained on billions of words of text, including real human dialogue, enabling them to produce replies that read as natural in most everyday interactions.

Personalisation is another dimension here. When a virtual assistant addresses you by name, references your past interactions, and adjusts its communication style to your preferences, it creates a relationship dynamic that users find genuinely satisfying. For smart customer service bots deployed in banking and retail, this level of personalisation directly impacts loyalty and retention metrics in measurable ways.

The most human-feeling AI chatbots also know when not to respond with information. They acknowledge confusion, ask clarifying questions, admit uncertainty, and hand off gracefully to human agents. These behaviours, all governed by NLP logic and policy layers, are what distinguish a genuinely intelligent chatbot from one that only appears smart in ideal conditions.
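One simple way to express such a policy layer is a set of confidence thresholds that decide between answering, asking, admitting uncertainty, and handing off. The thresholds, attempt limit, and action names below are assumptions for illustration and would be tuned per deployment.

```python
def choose_action(
    confidence: float,
    failed_attempts: int = 0,
    high: float = 0.75,
    low: float = 0.4,
    max_attempts: int = 2,
) -> str:
    """Pick the chatbot's next move from intent confidence and retry count."""
    # After repeated misunderstandings, stop guessing and involve a person.
    if failed_attempts >= max_attempts:
        return "handoff_to_human"
    if confidence >= high:
        return "answer"
    if confidence >= low:
        return "ask_clarifying_question"
    return "admit_uncertainty"


print(choose_action(0.9))                     # prints: answer
print(choose_action(0.5))                     # prints: ask_clarifying_question
print(choose_action(0.2))                     # prints: admit_uncertainty
print(choose_action(0.9, failed_attempts=2))  # prints: handoff_to_human
```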

Tone Matching

AI chatbots mirror the user’s communication style, becoming formal or casual as the context demands.

Clarifying Questions

When unclear, intelligent chatbots ask follow-up questions rather than guessing and giving wrong answers.

Sentence Variation

NLG models avoid repetitive phrasing and vary sentence structures to feel genuinely conversational.

Personalisation

Virtual assistants use past interaction data to personalise greetings, recommendations, and response content.

Graceful Handoff

NLP policy layers detect complex issues and hand off to human agents smoothly, maintaining conversation continuity.

Contextual Memory

Chatbot automation remembers what was said earlier and weaves it naturally into later responses in the session.


Frequently Asked Questions About AI Chatbots

When you type a message, ai chatbots run it through layers of natural language processing to read your words, understand your intent, and form a relevant response that matches what you actually needed.

AI chatbots may struggle with highly ambiguous phrases, regional slang, or very complex multi-part questions. Their NLP algorithms need enough training data to handle every unique language pattern users bring to a conversation.

AI chatbots use intent recognition models trained on thousands of real user inputs. They go beyond keywords and map the overall meaning of your message to known categories like booking, complaining, or asking for information.

Yes. Modern ai chatbots use sentiment analysis to detect emotional tone. By reading your word choices and phrasing patterns, they can identify frustration, satisfaction, or confusion and adjust their replies accordingly.

Many ai chatbots continuously learn through machine learning feedback loops. Each conversation helps improve their NLP models over time, making responses more accurate, relevant, and natural with regular use and retraining cycles.

Basic chatbots follow rigid rules and keyword matching. NLP powered ai chatbots understand language contextually, handle variations in phrasing, and respond intelligently even when queries are worded in unexpected or imperfect ways.

Many advanced ai chatbots now support multilingual natural language processing. They can understand and respond in Hindi, Arabic, and other languages, making them highly useful for businesses operating across India and the UAE.

AI chatbots use context windows and conversation history tracking to remember earlier messages in a session. This contextual understanding allows them to give coherent, connected answers across multi-turn conversations without losing the thread.

Enterprise ai chatbots built with proper encryption, access controls, and compliance standards are safe for sensitive queries. Businesses in India and Dubai rely on such intelligent chatbots for secure automated customer support every day.

NLP enables ai chatbots to use natural sentence structures, vary phrasing, and match conversational tone. Instead of stiff scripted replies, they generate fluid human like responses using language generation models trained on real dialogue.

Author

Aman Vaths

Founder of Nadcab Labs

Aman Vaths is the Founder & CTO of Nadcab Labs, a global digital engineering company delivering enterprise-grade solutions across AI, Web3, Blockchain, Big Data, Cloud, Cybersecurity, and Modern Application Development. With deep technical leadership and product innovation experience, Aman has positioned Nadcab Labs as one of the most advanced engineering companies driving the next era of intelligent, secure, and scalable software systems. Under his leadership, Nadcab Labs has built 2,000+ global projects across sectors including fintech, banking, healthcare, real estate, logistics, gaming, manufacturing, and next-generation DePIN networks. Aman’s strength lies in architecting high-performance systems, end-to-end platform engineering, and designing enterprise solutions that operate at global scale.