Key Takeaways

- The legal regulations of AI Copilot in India are governed by the DPDP Act 2023, IT Act 2000, and sector-specific guidelines from RBI, SEBI, and IRDAI for regulated industries.

- The Digital Personal Data Protection Act 2023 is the most significant legal regulation directly impacting AI Copilot systems that process, store, or transmit personal data of Indian citizens.

- AI Copilot systems processing sensitive personal data including financial records, health information, and biometric data face stricter consent and localization requirements under Indian law.

- Indian enterprises must designate a Data Protection Officer and establish grievance redressal mechanisms when AI Copilot systems are classified as significant data fiduciaries under DPDP rules.

- Cross-border data transfer restrictions under India’s emerging data regulations require AI Copilot platforms hosted outside India to meet prescribed adequacy standards before processing Indian citizen data.

- The IT Act 2000 and its amendments impose cybersecurity obligations on organizations that deploy AI Copilot systems with access to sensitive or confidential digital information assets.

- Transparency and explainability requirements are emerging as core legal regulations of AI Copilot governance, requiring organizations to document how AI systems make recommendations affecting individuals.

- AI Copilot systems used in high-stakes domains like credit decisioning, healthcare diagnosis, and hiring are subject to heightened accountability standards and potential regulatory pre-approval requirements in India.

- Non-compliance with legal regulations of AI Copilot in India can result in penalties up to 250 crore rupees under DPDP rules, plus sector-specific sanctions from industry regulators.

- Enterprises that embed legal compliance into AI Copilot architecture from the beginning face significantly lower remediation costs and regulatory risk than those that retrofit compliance afterward.

What are the Legal Regulations?

Legal regulations governing AI Copilot systems are the statutory laws, regulatory guidelines, and compliance frameworks that determine how AI-powered assistant tools may lawfully process data, interact with users, make recommendations, and integrate with business systems in a given jurisdiction. These regulations establish the boundaries within which AI Copilot systems must operate, the rights that individuals retain over their data used by these systems, and the obligations that organizations deploying such systems must fulfil.

India’s technology landscape is evolving faster than its regulatory frameworks, and nowhere is this tension more visible than in the rapidly expanding deployment of artificial intelligence Copilot systems across Indian enterprises. From Bengaluru’s technology sector and Mumbai’s financial institutions to Delhi’s government-adjacent enterprises and Hyderabad’s pharmaceutical giants, organizations are integrating AI Copilot tools into sensitive workflows at a pace that is significantly outrunning their compliance readiness.

In the global context, the legal regulations of AI Copilot differ significantly across markets. The European Union has taken the most prescriptive approach with its EU AI Act, classifying AI systems by risk level and imposing corresponding compliance obligations. The United States follows a more sector-specific and principles-based approach, with agencies like the FTC, SEC, and FDA issuing guidance relevant to their respective domains. The UAE has adopted a proactive AI governance stance through the UAE AI Strategy and sector-specific regulatory guidance from the ADGM and DIFC.

India’s approach is distinct from all three. Rather than a single comprehensive AI regulation, the legal regulations of AI Copilot in India emerge from the intersection of multiple legislative frameworks that were not originally designed with AI in mind but that apply to AI Copilot systems through their scope and intent. Understanding which laws apply, how they interact, and what specific obligations they create for AI Copilot deployments is the essential first step for any Indian enterprise operating in this space.

Key Legal Regulations of AI Copilot in India

The legal regulations of AI Copilot in India are drawn from several legislative and regulatory sources. Each creates distinct obligations for organizations that deploy, operate, or provide AI Copilot systems. A thorough understanding of all applicable frameworks is essential for building a defensible compliance position.

The legal regulations of AI Copilot in India are not yet consolidated into a single comprehensive AI law, but that does not mean the regulatory environment is permissive or without teeth. Multiple existing laws, recent legislative enactments, and emerging regulatory guidelines collectively create a compliance framework that AI Copilot deployments must navigate carefully. Failure to understand and comply with these requirements carries real legal, financial, and reputational consequences.

As an agency with over eight years of experience building and deploying AI-powered systems for enterprises across India, the UAE, and the US, we have guided organizations through the complex intersection of AI capability and legal compliance across every major jurisdiction. The legal regulations of AI Copilot in India are evolving rapidly, and organizations that build compliance into their AI Copilot architecture from day one are those that scale confidently without regulatory disruption.

Digital Personal Data Protection Act 2023 (DPDP Act)

The DPDP Act is the most directly applicable legal regulation for AI Copilot systems in India. It governs the collection, processing, storage, and transfer of personal data of Indian citizens by any entity, including AI Copilot systems. It introduces the concept of Data Fiduciaries, who determine the purpose and means of data processing, and Data Principals, the individuals whose data is processed. Any AI Copilot that processes personal data of Indian users is subject to this Act.

Information Technology Act 2000 and IT (Amendment) Act 2008

The IT Act and its amendments establish the legal framework for cybersecurity obligations in India. Section 43A imposes a duty of reasonable security practices on entities handling sensitive personal data or information (SPDI). AI Copilot systems with access to financial records, health data, passwords, and biometric information must comply with the Information Technology (Reasonable Security Practices) Rules 2011, which prescribe specific technical and organizational security measures.

Sector-Specific Regulatory Guidelines

Beyond the foundational legislation, sector regulators in India have issued guidance directly relevant to AI Copilot compliance. The Reserve Bank of India (RBI) has issued guidelines on technology and AI use by regulated financial entities. The Securities and Exchange Board of India (SEBI) has addressed algorithmic decision-making in capital markets. The Insurance Regulatory Authority of India (IRDAI) has issued frameworks for technology-based advisory systems in the insurance sector. Each framework creates specific obligations for AI Copilot systems operating in these regulated domains.

National Data Governance Framework Policy

India’s National Data Governance Framework Policy, in conjunction with the emerging India AI Mission, is shaping the governance standards that AI Copilot deployments will need to meet in the near term. These frameworks address data sharing, AI transparency, accountability for AI-driven decisions, and the government’s expectations for responsible AI deployment across both public and private sector organizations.

Data Privacy Laws for AI Copilot in India

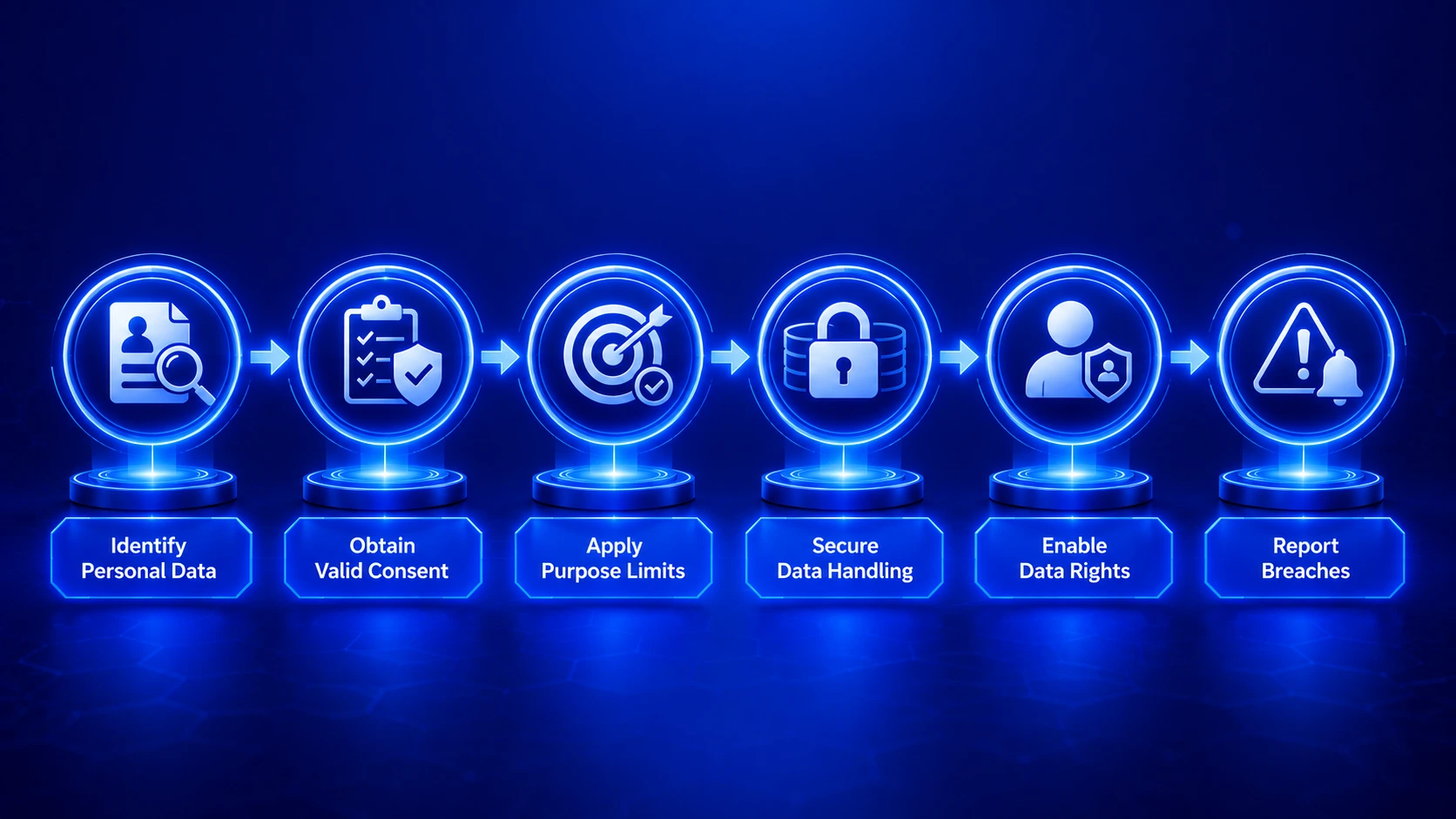

Data privacy is at the core of the legal regulations of AI Copilot in India, and the DPDP Act 2023 establishes the most comprehensive set of data privacy obligations that AI Copilot deployments must meet. These obligations go beyond simple data security controls and address the entire lifecycle of personal data from collection through deletion.

The consent requirement under the DPDP Act is particularly significant for AI Copilot systems. Before processing personal data, organizations must obtain free, specific, informed, unconditional, and unambiguous consent from data principals. For an AI Copilot that processes employee data to provide HR assistance, or customer data to support sales interactions, this means establishing clear consent mechanisms that explain what data is being processed, for what purpose, and how long it will be retained.

The purpose limitation principle requires that personal data collected for one purpose cannot be used by an AI Copilot for a different purpose without obtaining fresh consent. This has direct implications for AI Copilot systems that process data across multiple business functions. An AI Copilot trained on customer service interaction data cannot lawfully use that data to make credit decisions without separate consent for that purpose, even if the AI system architecture would technically allow it.

Data retention limitations are another critical compliance element under Indian data privacy law. The DPDP Act requires that personal data be retained only for as long as necessary for the stated purpose. For AI Copilot systems that continuously process and log user interactions, this means establishing and enforcing automated data retention and deletion policies that prevent indefinite storage of personal data in conversation logs, retrieved context, and interaction histories.

AI Copilot Compliance Under Data Protection Laws in India

Achieving compliance with the data protection laws that govern the legal regulations of AI Copilot in India requires organizations to meet specific operational and technical requirements across multiple dimensions. These are not aspirational standards but enforceable obligations with defined consequences for non-compliance.

AI Copilot Data Protection Compliance Requirements Under Indian Law

| Compliance Requirement | Applicable Law | AI Copilot Obligation | Non-Compliance Penalty |

|---|---|---|---|

| Informed Consent | DPDP Act 2023 | Obtain explicit consent before processing personal data through AI Copilot | Up to Rs 200 crore |

| Data Minimization | DPDP Act 2023 | AI Copilot must access only data necessary for the stated processing purpose | Up to Rs 150 crore |

| Security Safeguards | IT Act S. 43A and IT Rules 2011 | Implement reasonable security practices for all SPDI processed by AI Copilot | Compensation liability |

| Data Principal Rights | DPDP Act 2023 | Enable users to access, correct, and erase data processed by AI Copilot | Up to Rs 250 crore |

| Breach Notification | DPDP Act 2023 | Report AI Copilot-related data breaches to the Data Protection Board promptly | Up to Rs 200 crore |

| Cross-Border Transfer | DPDP Act 2023 | Ensure AI Copilot platforms receiving Indian personal data meet prescribed standards | Up to Rs 250 crore |

Organizations classified as Significant Data Fiduciaries under the DPDP Act face additional compliance obligations beyond those applicable to standard data fiduciaries. These include mandatory appointment of a Data Protection Officer based in India, conducting Data Protection Impact Assessments for high-risk AI processing activities, and implementing comprehensive algorithmic auditing mechanisms that allow regulators to assess how AI Copilot systems make decisions affecting data principals.

Security and Access Control Under Legal Regulations of AI Copilot

The security obligations embedded in the legal regulations of AI Copilot in India are among the most operationally demanding compliance requirements for enterprises. The IT (Reasonable Security Practices and Procedures and Sensitive Personal Data or Information) Rules 2011 prescribe a set of security controls that organizations handling sensitive data through AI Copilot systems must implement and maintain.

These rules require organizations to have a documented information security policy, implement ISO 27001 or equivalent security standards, conduct regular security audits, establish incident response procedures, and maintain audit logs of data access and processing activities. For an AI Copilot system that touches sensitive personal data, each of these requirements translates into specific architectural and operational controls that must be in place before and during operation.

Access control under the legal regulations of AI Copilot in India operates at two levels. At the system level, the AI Copilot infrastructure must implement role-based access controls that ensure employees can only access and query data relevant to their function. At the output level, the AI Copilot must be configured to prevent responses that aggregate or synthesize data beyond what a user is authorized to see, even when each individual source falls within their access permissions.

Encryption Requirements

All personal data processed, transmitted, or stored by the AI Copilot system must be encrypted at rest and in transit. This applies to conversation logs, retrieved context chunks, user query data, and any personal data included in the knowledge base.

Audit Trail Maintenance

Legal regulations of AI Copilot require maintaining comprehensive audit logs of who accessed what data, when, and through which AI interaction. These logs must be tamper-evident and retained for the regulatory-prescribed minimum period to support compliance audits and incident investigations.

Third-Party Vendor Assessment

When an AI Copilot system uses third-party infrastructure, model providers, or cloud services, organizations remain legally responsible for ensuring those vendors meet the security standards required by Indian law. Formal data processing agreements with all AI Copilot vendors are mandatory.

Vulnerability Assessment

Periodic vulnerability assessments and penetration testing of AI Copilot systems are required under reasonable security practice standards. These assessments must specifically test AI-specific attack vectors including prompt injection, data extraction through manipulation, and access control bypass attempts.

Incident Response Protocol

A documented incident response plan specifically addressing AI Copilot security incidents is required. This plan must define roles, notification timelines, containment procedures, and evidence preservation processes consistent with DPDP breach notification obligations and CERT-In reporting requirements.

Data Localization Controls

For categories of data subject to localization requirements under sectoral regulations, the AI Copilot infrastructure must ensure that data processing and storage occurs within India. This affects AI Copilot systems used by banks, financial institutions, and payment system operators specifically under RBI guidelines.

How India Governs Legal Regulations of AI Copilot Standards?

India’s governance approach for legal regulations of AI Copilot in evolving from a reactive, legislation-after-harm model toward a more proactive, principles-based regulatory framework. Several institutional mechanisms are shaping how the legal regulations of AI Copilot are enforced and developed in the Indian context.

The Data Protection Board of India, established under the DPDP Act, is the primary enforcement authority for data-related AI Copilot violations. The Board has the power to investigate complaints, conduct inquiries, impose penalties, and issue binding compliance directions. For AI Copilot deployments involving personal data, the Board’s actions will be the most significant regulatory risk to monitor.

The Ministry of Electronics and Information Technology (MeitY) plays a central role in shaping AI governance standards through policy initiatives, advisory frameworks, and the India AI Mission. MeitY’s approach has been to encourage responsible AI adoption while building the regulatory capacity needed to address harms as the technology matures. The India AI initiative launched under this ministry is creating technical standards and governance frameworks that will progressively formalize the legal regulations of AI Copilot compliance requirements.

Sector regulators including RBI, SEBI, IRDAI, and TRAI each maintain jurisdiction over AI Copilot systems within their respective sectors and are actively developing sector-specific guidance. [1] This multi-regulator landscape means that enterprises in regulated sectors may face AI Copilot legal regulations from multiple authorities simultaneously, each with distinct requirements and enforcement mechanisms.

Legal Concerns in AI Copilot Data Processing in India

The data processing activities performed by AI Copilot systems raise specific legal concerns that go beyond general data protection compliance. These concerns arise from the unique ways in which AI Copilot systems interact with data: synthesizing multiple sources, generating new information, making probabilistic inferences, and potentially retaining information in ways that are difficult to audit or control through traditional data governance mechanisms.

The first major legal concern is automated decision-making liability. When an AI Copilot system makes or significantly influences a decision affecting an individual, such as a credit assessment, a hiring recommendation, or a healthcare guidance output, questions of legal liability become complex. Indian law does not yet have comprehensive provisions addressing automated decision-making accountability specifically for AI systems, but existing tort law, contract law, and sector-specific regulations collectively create a liability framework that organizations must navigate carefully.

The second concern is intellectual property rights in AI outputs. When an AI Copilot generates content based on an enterprise’s proprietary knowledge base, questions arise about who owns that generated content, whether the training or retrieval process creates copyright issues with respect to source documents, and what obligations organizations have to disclose AI involvement in content creation under applicable Indian law.

The third concern is the admissibility of AI Copilot outputs as evidence in legal proceedings. If an AI Copilot system’s output is used as the basis for a contractual decision, a regulatory filing, or an internal investigation, the evidential value and reliability of that output may be challenged in court or regulatory proceedings. The Indian Evidence Act and its digital evidence provisions apply, but the specific treatment of AI-generated outputs remains an evolving area of judicial interpretation.

Challenges in Implementing Legal Regulations of AI Copilot Compliance

Understanding the legal regulations of AI Copilot in India is the first step; implementing compliance in practice is where most organizations encounter significant challenges. Based on our work with enterprises across India’s major technology, financial, and industrial centers, we consistently observe the same categories of implementation difficulty.

AI Copilot systems interact with data in complex, non-linear ways that do not map cleanly onto traditional data flow diagrams. Tracking exactly which personal data the AI Copilot accesses during each query, how that data is processed internally, and where it is temporarily stored in context windows and caches requires specialized technical expertise that most legal and compliance teams do not have internally.

The legal regulations of AI Copilot in India are not fully codified, and multiple laws interact in ways that create compliance uncertainty. Organizations must make good-faith judgments about how existing laws apply to novel AI behaviours, sometimes without regulatory guidance or precedent to reference. This ambiguity creates legal risk that cannot be fully eliminated, only managed through documented, reasonable interpretation and implementation.

Enterprises operating in banking, insurance, or capital markets face AI Copilot legal regulations from multiple authorities simultaneously. Complying with RBI guidance on AI use while also meeting DPDP obligations and potentially SEBI requirements for certain data types requires careful coordination between legal, compliance, and technology teams that many organizations lack the internal capacity to execute effectively.

Many Indian enterprises use AI Copilot platforms whose infrastructure is hosted outside India. Ensuring these platforms meet India’s data localization requirements, obtaining appropriate contractual protections, and verifying that cross-border transfers comply with DPDP permitted grounds requires ongoing due diligence that is frequently under-resourced in practice.

What are the Legal Regulations of AI Copilot for Indian Businesses?

For Indian businesses evaluating or operating AI Copilot systems, the legal regulations translate into a concrete set of operational requirements that must be addressed as a standard part of any AI Copilot deployment. These are not merely legal formalities but genuine operational obligations that affect how systems are built, how data is handled, and how accountability is maintained.

Sector-Specific Legal regulations of AI Copilot Requirements in India

| Industry Sector | Primary Regulator | Key AI Copilot Obligations | Special Requirements |

|---|---|---|---|

| Banking and Finance | RBI + DPDP | Data localization, audit trails, explainable AI outputs | Financial data must be stored within India |

| Healthcare | NHA + DPDP | Sensitive health data protections, consent for diagnostic AI | Health data classified as sensitive personal data |

| Capital Markets | SEBI + DPDP | Algorithmic decision accountability, investor data protection | Algo trading AI Copilot requires SEBI registration |

| Insurance | IRDAI + DPDP | Underwriting AI transparency, customer consent obligations | AI-driven decisions must be explainable to policyholders |

| Technology and SaaS | MeitY + DPDP | Data processing agreements, security audits, user consent | Significant data fiduciary obligations above threshold |

| E-Commerce and Retail | DPDP + Consumer Protection | Customer data minimization, grievance officer designation | AI personalization must disclose automated profiling |

For technology companies building AI Copilot platforms for Indian business clients, the legal regulations of AI Copilot create both obligations and opportunities. Platforms that are designed with DPDP compliance built in from the architecture level, that offer India-hosted deployment options, that provide comprehensive consent management and data rights tooling, and that maintain CERT-In compliant security frameworks will have a significant competitive advantage in the Indian enterprise market as regulatory enforcement matures.

Legal Regulations of AI Copilot in India Compliance

Building a legally compliant AI Copilot deployment in India requires a structured approach that addresses regulatory requirements at every stage from initial design through ongoing operation. Based on our extensive experience supporting enterprises across India’s major markets, a compliance-first AI Copilot strategy consistently produces better outcomes than reactive compliance retrofitting.

AI Copilot Legal Compliance Readiness: Key Control Areas

The practical compliance pathway for Indian enterprises begins with a legal and technical assessment that maps all personal data flows within the proposed AI Copilot architecture to the applicable legal regulations of AI Copilot. This assessment identifies compliance gaps, prioritizes remediation based on regulatory risk, and produces a documented compliance position that can be presented to regulators or legal counsel if required.

Ongoing compliance requires treating the legal regulations of AI Copilot not as a one-time certification but as a continuous operational commitment. The regulatory landscape in India is evolving rapidly, with new rules and guidance emerging from multiple authorities on a regular basis. Organizations that build compliance monitoring into their AI Copilot governance structure, rather than treating it as an annual audit exercise, are consistently better positioned to adapt to regulatory changes without operational disruption.

Investing in compliance-ready AI Copilot architecture from the outset costs a fraction of the remediation expenses, regulatory penalties, and reputational damage that non-compliant deployments generate once enforcement actions begin. For enterprises across India’s growing AI Copilot market, compliance is not a constraint on innovation; it is the foundation of sustainable, trustworthy AI-powered operations that customers, regulators, and stakeholders can rely upon.

Legal Compliance is the Foundation of Trustworthy AI Copilot in India

The legal regulations of AI Copilot in India are complex, multi-layered, and actively evolving. The DPDP Act 2023, IT Act provisions, sector-specific regulatory frameworks, and emerging AI governance standards collectively create a compliance environment that demands deliberate, informed attention from every organization deploying AI Copilot systems in the Indian market.

The enterprises that will build lasting competitive advantage from AI Copilot technology are those that treat legal compliance not as a brake on deployment but as an enabler of trust. A legally compliant AI Copilot system earns the confidence of customers, satisfies the scrutiny of regulators, and provides the governance foundation that allows confident scaling across India’s diverse and rapidly growing enterprise markets.

From Bengaluru’s technology sector to Mumbai’s financial institutions and beyond, the organizations that embed the legal regulations of AI Copilot into their architecture from day one are the ones writing the next chapter of India’s AI-powered business transformation with confidence and integrity.

Build a Legally Compliant AI Copilot for India

We design DPDP-ready, security-first AI Copilot systems for Indian enterprises. Architecture, compliance documentation, and ongoing regulatory support included.

Frequently Asked Questions

The main legal regulations of AI Copilot in India include the Digital Personal Data Protection Act 2023, the IT Act 2000 with its amendments, and sector-specific compliance guidelines issued by regulators such as RBI, SEBI, and IRDAI. Together, these laws define how AI Copilot systems must operate legally in India.

Yes. Under the legal regulations of AI Copilot, any tool that collects, stores, processes, or transfers personal data of Indian citizens must comply with the DPDP Act 2023. Organizations using AI Copilot solutions are treated as Data Fiduciaries and must follow obligations related to consent, security, and data rights.

Yes. According to the legal regulations of AI Copilot, businesses must obtain free, informed, specific, and unambiguous consent before processing personal data through AI systems. This applies to employee records, customer databases, and other personal information handled by AI Copilot tools.

It depends on the sector and data category. The legal regulations of AI Copilot allow certain cross-border transfers under DPDP rules, but industries like banking may require strict data localization. Businesses must also ensure contractual safeguards and compliance with approved jurisdictions.

Violations under the legal regulations of AI Copilot can attract penalties of up to Rs 250 crore under the DPDP Act 2023. Additional fines, restrictions, or operational actions may also be imposed by sector regulators such as RBI or SEBI.

Under the legal regulations of AI Copilot, organizations handling large volumes of sensitive or high-risk personal data may be classified as Significant Data Fiduciaries. These entities must appoint a Data Protection Officer, conduct DPIAs, and maintain stronger governance controls.

The legal regulations of AI Copilot require organizations to report breaches to the Data Protection Board of India within prescribed timelines. In cybersecurity incidents, CERT-In reporting obligations may also apply, along with internal incident response procedures.

Yes. The legal regulations of AI Copilot are stricter in regulated sectors like banking and healthcare. RBI-regulated entities must meet localization and technology risk rules, while healthcare systems must comply with health data privacy and consent standards

To meet the legal regulations of AI Copilot, organizations should implement encryption, access controls, audit trails, security policies, periodic penetration testing, and compliance with standards such as ISO 27001 when handling sensitive data.

The legal regulations of AI Copilot should be monitored continuously. Organizations are advised to conduct quarterly compliance reviews, annual audits, and immediate legal assessments whenever AI Copilot workflows or data processing activities change.

Author

Aman Vaths

Founder of Nadcab Labs

Aman Vaths is the Founder & CTO of Nadcab Labs, a global digital engineering company delivering enterprise-grade solutions across AI, Web3, Blockchain, Big Data, Cloud, Cybersecurity, and Modern Application Development. With deep technical leadership and product innovation experience, Aman has positioned Nadcab Labs as one of the most advanced engineering companies driving the next era of intelligent, secure, and scalable software systems. Under his leadership, Nadcab Labs has built 2,000+ global projects across sectors including fintech, banking, healthcare, real estate, logistics, gaming, manufacturing, and next-generation DePIN networks. Aman’s strength lies in architecting high-performance systems, end-to-end platform engineering, and designing enterprise solutions that operate at global scale.